Two pressure points are breaking AI transformations: governance built for a slower world, and a trust gap between what leaders say and what people experience. These are not separate problems — they feed each other. Most transformations that lose momentum die here.

Approval cycles, oversight committees, and annual planning were designed for change that moved in phases. AI doesn’t work that way. It reshapes tasks, roles, and decision rights continuously. Agentic systems now act without human review. Three questions are already pressing:

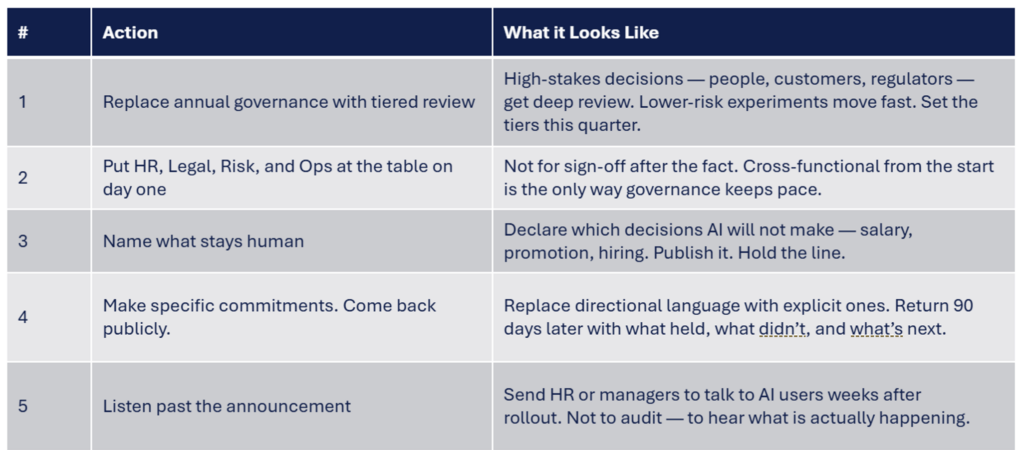

The principle is simple: AI doesn’t decide people’s salaries or careers. Humans do. Operationalizing that at speed is the harder problem. Organizations doing it well have stopped treating governance as compliance and started running it as an operating function.

Trust is slow to build and quick to collapse — and it’s tested every time leadership communication and employee experience diverge. In AI transformations, they diverge constantly. Managers are asked to lead through ambiguity they weren’t trained for. CHROs hear the same thing from their teams: give us something stable enough to commit to.

Builder.ai is the cautionary case. The company raised over $450 million on an AI platform whose work turned out to be done largely by human engineers. When that gap surfaced, the company went bankrupt. The dynamic is the same inside organizations. When what leaders say keeps diverging from what people experience, trust doesn’t erode gradually. It collapses.

Trust is rebuilt through consistency — how leaders behave when transformation gets hard, long after the announcement is made. One CHRO in the Council Advisors network sends junior HR staff to talk directly with AI users after major rollouts. Not to check compliance. To listen.

Slow governance erodes trust. Eroded trust makes governance slower. Decisions drag. Reasoning goes opaque. People fill in their own version of what’s happening — and it’s rarely charitable. Some teams stop waiting and build workarounds, which carry their own risks. The loop doesn’t need a dramatic event to close. It closes through accumulation. This is why well-resourced transformations led by capable people still lose ground.

This isn’t a process problem. It’s a leadership problem. The answer is visible, specific, and consistent leadership — past the announcement, past the deployment, past the first hard quarter.

The bottom line. The organizations finding their footing aren’t waiting for things to stabilize. They’re learning to lead without that luxury.

Sources

OneTrust 2025 AI-Ready Governance Report; S&P Global 2025 AI Survey; FT, “How culture unlocks GenAI’s true potential,” Dave Niles.
